Mining server log file data has helped the research community design and develop algorithms for predicting such anomalies, either manually with statistical models or with machine learning techniques. Website failures occur for many reasons, such as: (i) the page is not found; (ii) the website is down for maintenance; (iii) the user's request to the server timed out, i.e., it could not be processed within the specified time limit; (iv) the system ran out of memory; (v) the user login is invalid because of an incorrect login name, an incorrect password, or other password-related issues; and (vi) the location of the page the user is trying to access has changed. Web applications keep changing dynamically to meet user expectations, so failures do happen, sometimes planned and often unplanned.
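As a rough illustration of how the failure reasons above might be recovered from server logs, the following minimal sketch labels access-log lines by HTTP status code. It is not the article's method: the Common Log Format input, the `STATUS_TO_FAILURE` mapping, and the function names `classify` and `count_failures` are all assumptions made for this example. Out-of-memory conditions usually surface only as a generic 5xx response, so the sketch cannot separate them from other server errors.

```python
import re
from collections import Counter

# Assumed mapping from HTTP status codes to the failure reasons listed above.
# This mapping is illustrative and is not taken from the article.
STATUS_TO_FAILURE = {
    404: "page_not_found",
    503: "maintenance_or_unavailable",
    408: "request_timeout",
    504: "request_timeout",
    401: "invalid_login",
    301: "page_moved",
    308: "page_moved",
}

# Common Log Format: host ident user [time] "request" status size
LOG_LINE = re.compile(r'^(\S+) \S+ \S+ \[([^\]]+)\] "([^"]*)" (\d{3}) (\S+)')


def classify(line):
    """Return a failure label for one log line, or None if it cannot be parsed."""
    match = LOG_LINE.match(line)
    if match is None:
        return None
    status = int(match.group(4))
    if status in STATUS_TO_FAILURE:
        return STATUS_TO_FAILURE[status]
    if status >= 500:
        # Includes out-of-memory crashes, which the status code alone cannot identify.
        return "server_error"
    return "ok"


def count_failures(lines):
    """Count labels over an iterable of log lines, skipping unparseable lines."""
    labels = (classify(line) for line in lines)
    return Counter(label for label in labels if label is not None)


sample = [
    '203.0.113.7 - - [10/Oct/2023:13:55:36 +0000] "GET /old-page HTTP/1.1" 404 512',
    '203.0.113.8 - alice [10/Oct/2023:13:55:40 +0000] "POST /login HTTP/1.1" 401 128',
    '203.0.113.9 - - [10/Oct/2023:13:56:02 +0000] "GET /cart HTTP/1.1" 504 0',
    '203.0.113.9 - - [10/Oct/2023:13:56:05 +0000] "GET / HTTP/1.1" 200 1043',
]
print(count_failures(sample))
```

Label counts of this kind, aggregated per time window, are one plausible input for the statistical or machine learning predictors mentioned above.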

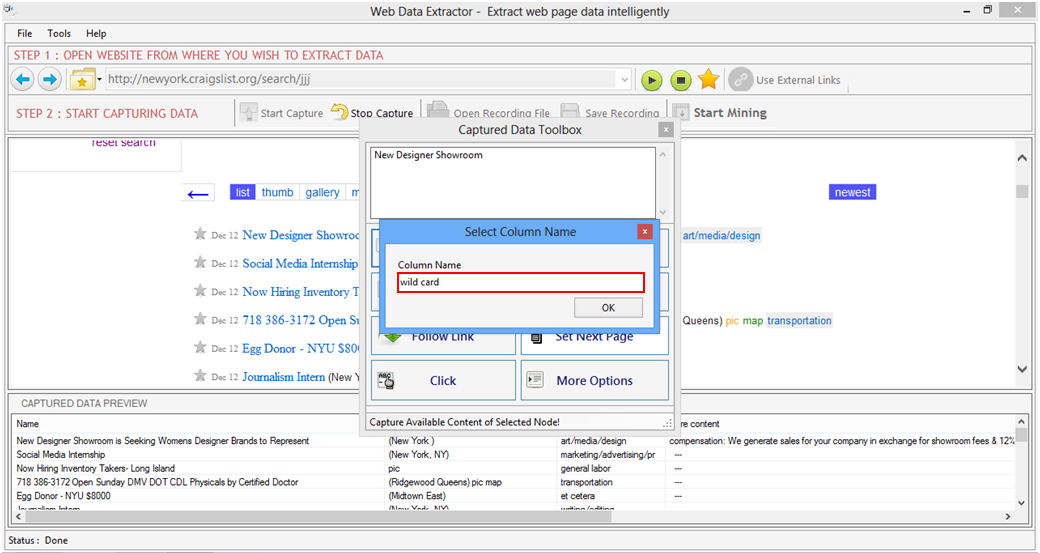

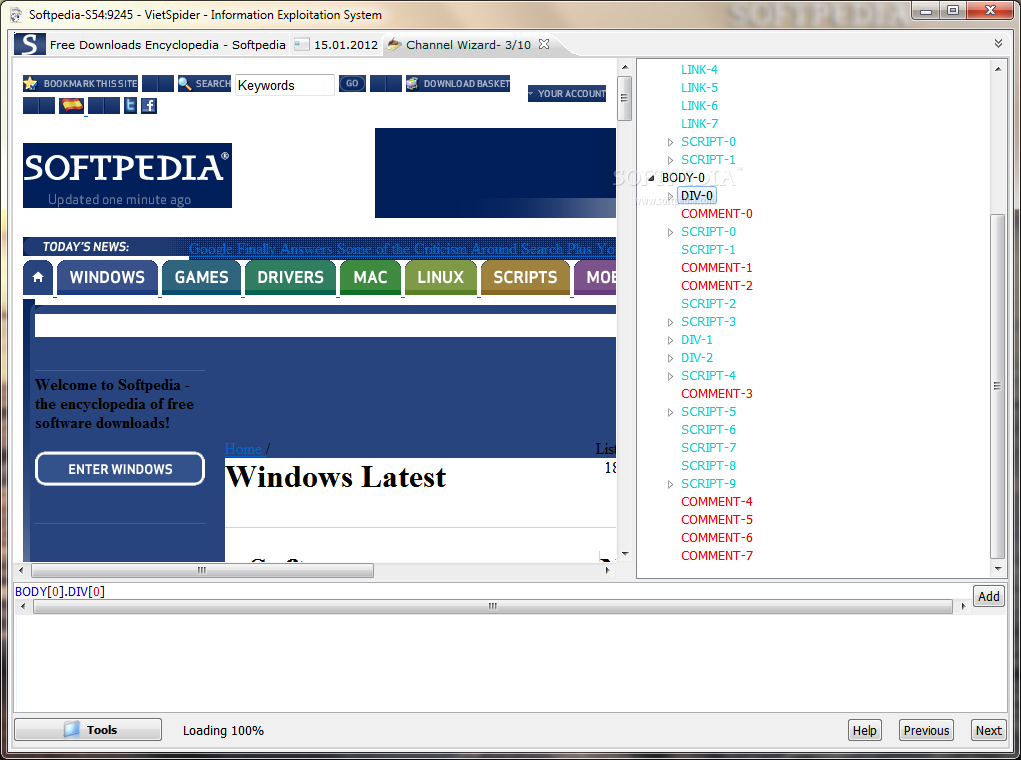

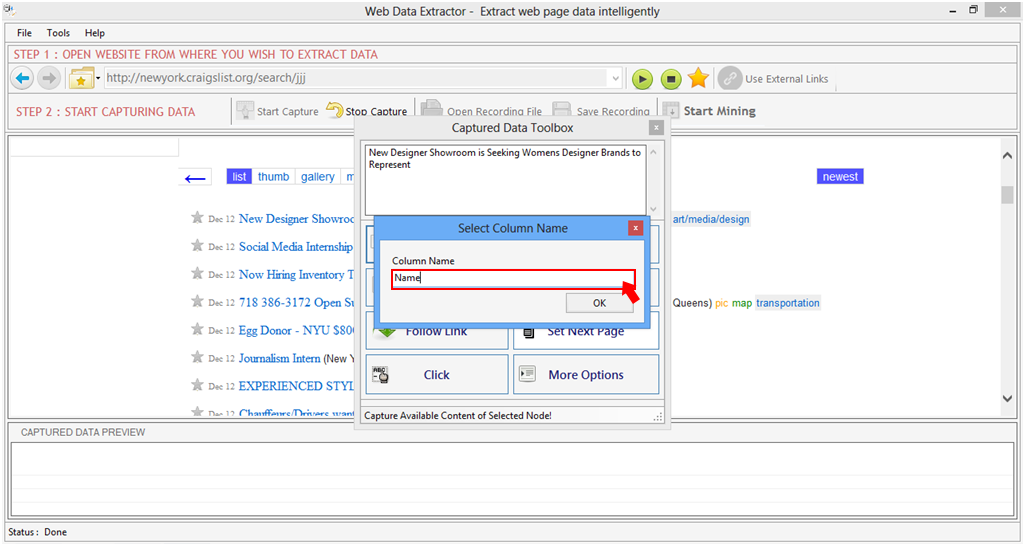

In the information extraction field, on-demand content consumption by internet users makes failures even more challenging to predict. Although application and infrastructure deployment strategies have evolved from on-premise data centers to the cloud, failures persist because of situations that are sometimes predictable and often not. Demand for web applications that are available anytime, anywhere has made DevOps a specialized field for the future of the IT industry. Traditional Information Technology (IT) operations teams have transformed into Site Reliability Engineering (SRE) or Development Operations (DevOps) teams to handle these situations. Predicting website failures has never been easy, whether they are crashes, memory outages, or incidents. The focus of existing machine learning-based information extraction frameworks is only on information extraction, using core extraction logic that is susceptible to website changes; they therefore lack core features such as proactive failure prediction and intelligent information extraction. The aim of this article is to build a robust and intelligent information extraction framework that can not only proactively predict website failure but also automatically extract information, using the deep-learning techniques You Only Look Once (YOLO) and Long Short-Term Memory (LSTM) networks. The proactive detection using LSTM detects the new location of a web page after a layout change and enables automatic extraction of information from the new page. A real-world case with a retail website for intelligent information extraction and an offline experimentation environment are set up to demonstrate proactive failure prediction and automatic extraction, resulting in high failure-prediction performance and high precision and recall for object detection and information extraction.

The inability to extract information with traditional and machine learning techniques when website layouts change dynamically poses a significant challenge for the technical community, which must keep up with such changes. The amount of information available on the internet today requires effective information extraction and processing to offer hyper-personalized user experiences.
